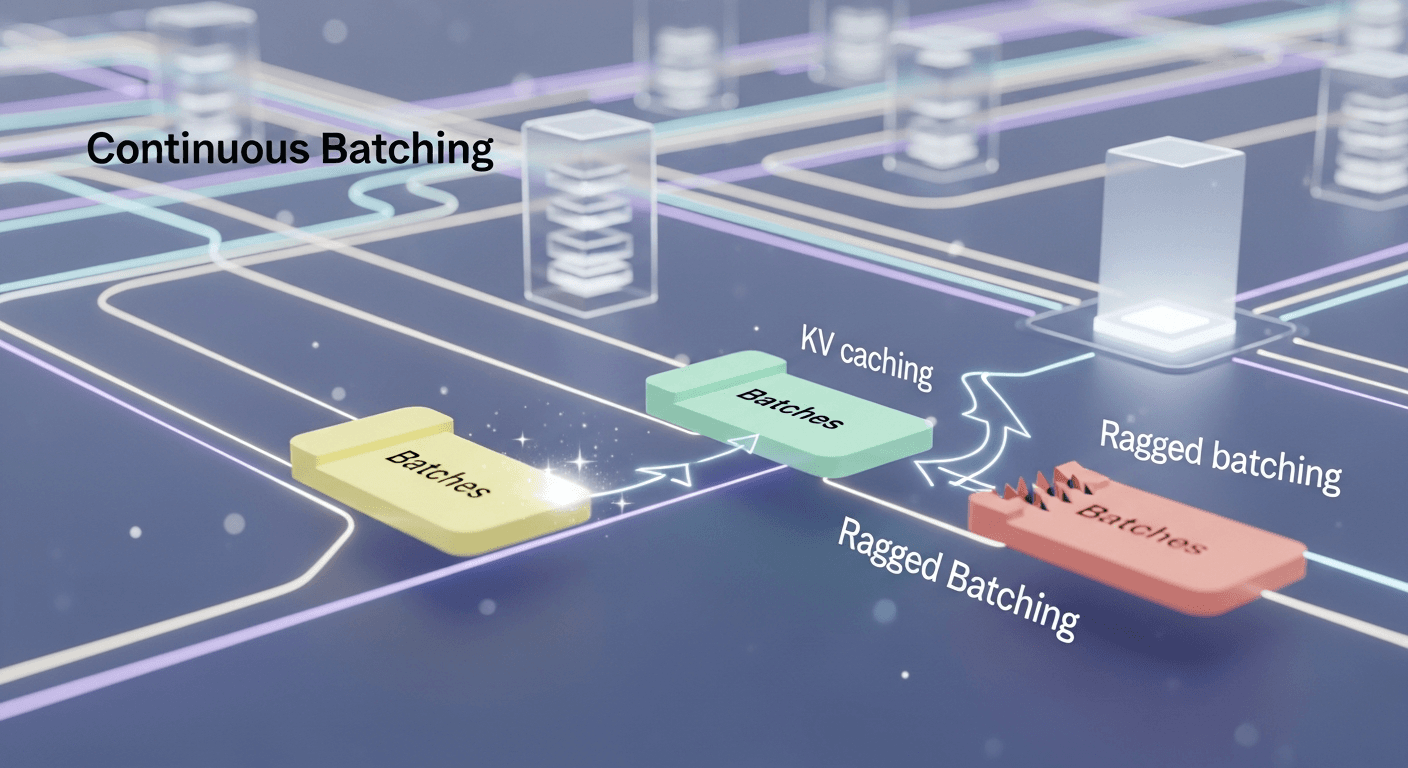

Understanding Continuous Batching: How Modern LLMs Maintain Speed Under Load

Introduction

Have you ever noticed how a chatbot types out answers? There are two distinct phases: a pause while you wait for the first token, then a steady stream of tokens. Behind this experience lies a technique that keeps GPUs busy while many users interact at once: continuous batching. In this guide, we break the concept down from the ground up, covering the role of attention and KV caches, the inefficiencies of naive batching, and how continuous batching improves large language model (LLM) serving.

What You Will Learn

- The role of attention in driving the compute costs of LLMs

- How KV caching transforms costly recomputation into efficient reuse

- The drawbacks of fixed-size batching due to padding

- The advantages of ragged batching and dynamic scheduling that eliminate waste

- How continuous batching combines prefill and decode to optimize throughput

- Practical steps to implement continuous batching in engines like Hugging Face TGI and vLLM

A Quick Note on Sources and Scope

This article draws from the original Hugging Face blog post published on November 25, 2025, which explains continuous batching from first principles. We also incorporate insights from open references such as TGI documentation, the vLLM PagedAttention paper, and NVIDIA’s guidance on in-flight batching.

From Attention to Throughput: The Building Blocks

Tokens and Attention at a Glance

- LLMs work with tokens (subword units) instead of raw characters or whole words.

- Each token traverses multiple layers. Most operations are token-wise, except for attention.

- Attention is where tokens interact: each token's query (Q) is scored against the keys (K) of the tokens it can see, and the resulting weights are used to mix their values (V).

- For a prompt of length n, computing attention scores takes on the order of n^2 query-key comparisons (per head, per layer). This quadratic growth makes long prompts costly.
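The following is a minimal pure-Python sketch of single-head causal self-attention that makes the quadratic growth visible. It is illustrative only. The function name `causal_attention` and the tiny random inputs are assumptions. Reusing the same vectors as Q, K and V stands in for learned projections. Under a causal mask, token i is compared only with tokens 0..i. A prompt of n tokens therefore needs n(n+1)/2 dot products per head per layer, which is still on the order of n^2.

```python
import math
import random

def causal_attention(q, k, v):
    """Single-head causal self-attention over lists of vectors (pure Python).

    Returns the outputs and the number of query-key dot products computed,
    which makes the quadratic cost visible.
    """
    scale = 1.0 / math.sqrt(len(q[0]))
    outputs, dot_products = [], 0
    for i in range(len(q)):
        # Causal mask: token i attends only to tokens 0..i.
        scores = []
        for j in range(i + 1):
            scores.append(sum(a * b for a, b in zip(q[i], k[j])) * scale)
            dot_products += 1
        # Numerically stable softmax over the allowed positions.
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        outputs.append([
            sum(w * v[j][c] for j, w in enumerate(weights)) / total
            for c in range(len(v[0]))
        ])
    return outputs, dot_products

random.seed(0)
for n in (8, 16, 32):
    x = [[random.gauss(0, 1) for _ in range(4)] for _ in range(n)]
    _, count = causal_attention(x, x, x)
    print(n, count)  # 8 36, 16 136, 32 528: n * (n + 1) / 2 dot products
```

Doubling the prompt length roughly quadruples the number of score computations.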

Prefill vs. Decode

- Prefill: In this first, compute-heavy pass, the model processes the entire prompt to predict the first output token, which requires attention over all n prompt tokens.

- Decode: After the first token appears, generation continues one token at a time. Each new token attends to all previous tokens, but with KV caching, the keys and values of those tokens are reused instead of recomputed.

KV Caching: Reuse Instead of Recompute

During the prefill stage, the model computes K and V for each token. Storing these tensors means the model does not need to recompute them for every new token during decoding. This key-value (KV) cache reduces the attention cost of each decoding step from roughly O(n^2) (recomputing attention over the whole sequence) to O(n) (one new query scored against n cached keys), plus the computation for the new token itself. The trade-off is memory usage that grows linearly with the number of prior tokens.
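A single-head, pure-Python sketch shows that caching changes the cost but not the result. The helper names (`step_with_cache`, `step_without_cache`), the dictionary used as a cache, and the random weights are illustrative assumptions. For simplicity, every token is fed one at a time, and random vectors stand in for real hidden states.

```python
import math
import random

def matvec(w, x):
    """Multiply matrix w (list of rows) by vector x."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]

def attend(q, keys, values):
    """Softmax attention of one query over the given keys and values."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [sum(a * b for a, b in zip(q, k)) * scale for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    return [sum(w * v[c] for w, v in zip(weights, values)) / total
            for c in range(len(values[0]))]

def step_without_cache(history, wq, wk, wv):
    """Recompute K and V for every token so far, then attend from the newest one."""
    keys = [matvec(wk, h) for h in history]
    values = [matvec(wv, h) for h in history]
    return attend(matvec(wq, history[-1]), keys, values)

def step_with_cache(x, cache, wq, wk, wv):
    """Project only the new token; earlier keys and values are read from the cache."""
    cache["k"].append(matvec(wk, x))
    cache["v"].append(matvec(wv, x))
    return attend(matvec(wq, x), cache["k"], cache["v"])

random.seed(0)
d = 4
wq, wk, wv = ([[random.gauss(0, 1) for _ in range(d)] for _ in range(d)] for _ in range(3))
tokens = [[random.gauss(0, 1) for _ in range(d)] for _ in range(6)]

cache = {"k": [], "v": []}
for t, x in enumerate(tokens):
    cached = step_with_cache(x, cache, wq, wk, wv)
    recomputed = step_without_cache(tokens[: t + 1], wq, wk, wv)
    # Same output; the cached path projects 1 token per step instead of t + 1.
    assert all(abs(a - b) < 1e-9 for a, b in zip(cached, recomputed))
```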

- Practical Insight: Without a cache, computing a new token would mean recalculating every previous token’s K and V. With a cache, these calculations are done once and reused.

- What Drives Its Size: The KV cache grows with the number of layers, the number of KV heads, the head dimension, the numeric precision, and the number of cached tokens. This is why serving long contexts or many concurrent requests can exhaust GPU memory even when compute demands are modest.
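A rough size estimate follows from the fact that the cache holds one key vector and one value vector per token, per layer, per KV head. The sketch below encodes that formula. `kv_cache_bytes` is a hypothetical helper. The example configuration is an assumption resembling a 7B-class model without grouped-query attention: 32 layers, 32 KV heads, head dimension 128, and 2-byte fp16 values.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, num_tokens, bytes_per_elem=2):
    """Approximate KV cache size in bytes.

    The leading factor 2 counts one key tensor plus one value tensor.
    """
    return 2 * num_layers * num_kv_heads * head_dim * num_tokens * bytes_per_elem

# Hypothetical 7B-class configuration, fp16 (2 bytes per element).
per_token = kv_cache_bytes(32, 32, 128, num_tokens=1)
print(per_token / 2**20)                           # 0.5  (MiB per token)
print(kv_cache_bytes(32, 32, 128, 4096) / 2**30)   # 2.0  (GiB for one 4,096-token sequence)
```

At that rate, 16 concurrent 4,096-token sequences would need about 32 GiB for the cache alone. Models with grouped-query attention use fewer KV heads than query heads, which shrinks the cache proportionally.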

Chunked Prefill

Processing a very long prompt in a single pass may not fit in GPU memory alongside the space needed for decoding and other requests. Chunked prefill processes the prompt in segments, appending each segment's K and V to the cache before moving on. Thanks to causal masking, this gives the same results as processing the entire prompt at once while bounding the memory used at each step, which is crucial for mixing prefill and decode work later on.
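A small experiment makes the equivalence claim concrete. This is a minimal sketch under simplifying assumptions. `chunked_prefill` is a hypothetical helper, and the projections are the identity (Q = K = V = the token vector). Each chunk's keys and values are appended to the cache, and each token then attends causally to everything up to and including itself.

```python
import math
import random

def attend(q, keys, values):
    """Softmax attention of one query over the given keys and values."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [sum(a * b for a, b in zip(q, k)) * scale for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    return [sum(w * v[c] for w, v in zip(weights, values)) / total
            for c in range(len(values[0]))]

def chunked_prefill(prompt, chunk_size):
    """Prefill `prompt` chunk by chunk, growing a KV cache as we go."""
    cache_k, cache_v, outputs = [], [], []
    for start in range(0, len(prompt), chunk_size):
        chunk = prompt[start:start + chunk_size]
        cache_k.extend(chunk)   # append this chunk's keys...
        cache_v.extend(chunk)   # ...and values to the cache
        for offset, x in enumerate(chunk):
            pos = start + offset
            # Causal: attend to earlier chunks (already cached) and to earlier
            # tokens of this chunk, never to later tokens.
            outputs.append(attend(x, cache_k[:pos + 1], cache_v[:pos + 1]))
    return outputs, cache_k, cache_v

random.seed(1)
prompt = [[random.gauss(0, 1) for _ in range(4)] for _ in range(10)]
full, _, _ = chunked_prefill(prompt, chunk_size=len(prompt))   # one pass
for size in (1, 3, 4):
    out, k, _ = chunked_prefill(prompt, chunk_size=size)
    assert len(k) == len(prompt)
    assert all(abs(a - b) < 1e-12 for o, f in zip(out, full) for a, b in zip(o, f))
```

In a real model, the per-step activation memory scales with the chunk size. This is the knob chunked prefill exposes, even though this toy computes the same numbers either way.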

Why Naive Batching Leaves Performance on the Table

Classic Static Batching

Batching is a straightforward method to enhance throughput by processing multiple prompts in one forward pass. To create a rectangular tensor, shorter prompts are padded to match the length of the longest prompt, with an attention mask ensuring padded tokens don’t influence real tokens.
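Here is a minimal sketch of building such a padded batch. The token ids, the `PAD_ID` value and the `pad_batch` helper are hypothetical. Right padding is used for simplicity. The mask marks real tokens with 1 and padding with 0.

```python
PAD_ID = 0  # hypothetical padding token id

def pad_batch(prompts, pad_id=PAD_ID):
    """Right-pad token-id lists into a rectangle and build the padding mask.

    mask[r][c] == 1 marks a real token; 0 marks padding that attention must ignore.
    """
    width = max(len(p) for p in prompts)
    input_ids = [p + [pad_id] * (width - len(p)) for p in prompts]
    attention_mask = [[1] * len(p) + [0] * (width - len(p)) for p in prompts]
    return input_ids, attention_mask

prompts = [[5, 7, 8], [5, 9, 10, 11, 12, 13, 14, 15], [5, 4]]
ids, mask = pad_batch(prompts)
real = sum(sum(row) for row in mask)
slots = len(ids) * len(ids[0])
print(real, slots)  # 13 24: 11 of the 24 slots are padding
```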

The Problem with Padding

- Waste Scales with Variability: When one request is significantly longer than others, the computational effort on padding can exceed that on actual tokens.

- Waste Persists Over Time: During decoding, some prompts finish early (by emitting an end-of-sequence token) while others continue. With a fixed batch shape, the finished rows keep consuming computation unless the batch is reshuffled.
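Both sources of waste can be estimated with a simplified cost model. This sketch is an illustration, not a benchmark. `static_batch_waste` is a hypothetical helper that counts token slots and treats every slot as equal cost. It assumes each request needs a known number of decode steps and that the batch keeps stepping until its longest generation ends.

```python
def static_batch_waste(prompt_lens, output_lens):
    """Useful vs. computed token slots for one static batch.

    Simplified model: prefill pads every row to the longest prompt, and each
    request needs output_lens[i] decode steps; the batch keeps stepping until
    the longest generation finishes, computing a slot for every row each step.
    """
    rows = len(prompt_lens)
    useful = sum(prompt_lens) + sum(output_lens)
    computed = rows * max(prompt_lens) + rows * max(output_lens)
    return useful, computed

useful, computed = static_batch_waste([10, 200, 30, 50], [5, 400, 20, 60])
print(useful, computed, f"{useful / computed:.0%} useful")  # 775 2400 32% useful
```

One long request (200 prompt tokens, 400 output tokens) keeps the whole batch busy, so roughly two thirds of the computed slots are wasted.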

Dynamic Batching: A Small Improvement

Dynamic batching (or dynamic scheduling) improves on static batching by replacing completed requests with waiting ones, so finished rows no longer consume compute. However, with rectangular tensors, a newly added request needs its whole prompt prefilled while the others need only one decode token each, so every decode row must be padded to the length of the new prompt. With long prompts, this padding overhead can be substantial.
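In a rectangular batch, a single new request that needs prefill forces padding onto every request that is only decoding. A tiny cost model shows the effect. `rectangular_step_cost` is a hypothetical helper that counts token slots only, ignoring attention's quadratic term.

```python
def rectangular_step_cost(decode_rows, new_prompt_lens):
    """(useful, computed) token slots for one rectangular forward pass.

    Decode rows need 1 slot each; new requests need their full prompt. Every
    row is padded to the widest one.
    """
    rows = decode_rows + len(new_prompt_lens)
    width = max(new_prompt_lens, default=1)
    useful = decode_rows + sum(new_prompt_lens)
    return useful, rows * width

print(rectangular_step_cost(7, []))     # (7, 7): pure decode, no padding
print(rectangular_step_cost(7, [500]))  # (507, 4000): 7 decode rows padded to 500
```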

Ragged Batching: Moving Beyond Rectangular Constraints

By eliminating the explicit batch dimension and concatenating tokens from different requests into a single long sequence, we can employ attention masks to ensure tokens from individual requests do not interfere with one another. This is ragged batching: one packed token array plus a carefully constructed mask eliminates the need for padding.

- Benefit: You only compute on actual tokens, avoiding wasted operations on padding.

- Constraint: The total number of tokens in the packed batch is limited by available memory at any given moment.
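The packing and the mask can be sketched in a few lines. `build_ragged_batch` is a hypothetical helper. It materializes a dense n×n boolean mask for readability, whereas production kernels typically take per-request offsets (cumulative sequence lengths) instead. The sketch also omits each request's previously cached K/V, which a real continuous-batching step would let the new tokens attend to.

```python
def build_ragged_batch(sequences):
    """Pack several requests' tokens into one array with a block-diagonal causal mask.

    mask[i][j] is True when packed token i may attend to packed token j, i.e.
    both belong to the same request and j does not come after i.
    """
    tokens, seq_ids, positions = [], [], []
    for sid, seq in enumerate(sequences):
        tokens.extend(seq)
        seq_ids.extend([sid] * len(seq))
        positions.extend(range(len(seq)))  # position ids restart per request
    n = len(tokens)
    mask = [[seq_ids[i] == seq_ids[j] and positions[j] <= positions[i]
             for j in range(n)] for i in range(n)]
    return tokens, positions, mask

tokens, positions, mask = build_ragged_batch([[11, 12, 13], [21, 22], [31]])
print(tokens)     # [11, 12, 13, 21, 22, 31]: 6 real tokens, no padding
print(positions)  # [0, 1, 2, 0, 1, 0]
```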

Putting It All Together: What Continuous Batching Really Means

Continuous batching integrates three fundamental concepts to keep GPUs fully utilized while handling requests that start and finish at varied times:

- KV Caching

  - Prevents the recomputation of K and V for past tokens during decoding.

  - Frees up compute so the GPU can serve more concurrent requests.

- Chunked Prefill

  - Splits long prompts into memory-friendly segments.

  - Lets prefill work be interleaved with ongoing decode work.

- Ragged Batching with Dynamic Scheduling

  - Packs tokens from multiple requests (both prefill and decode) into a single forward pass, with attention masks keeping requests separate.

  - Swaps in the next request or chunk from the queue as soon as a request finishes or a chunk completes.

The Serving Loop at a High Level

- Maintain two separate queues: a decode queue (for one-token steps) and a prefill queue (for chunks of prompt tokens).

- In each iteration, build a ragged batch that fills, without exceeding, a per-step budget of M tokens set by GPU memory:

  - First, add as many 1-token decode steps as possible (small but latency-sensitive).

  - Next, spend the remaining budget on one or more prefill chunks.

- Execute a single forward pass with a mask that isolates each request.

- Emit new tokens for the decode requests, extend their caches, and return them to the decode queue unless they are finished.

- Mark prefill chunks as complete and extend their caches. Requeue each request for further prefill, or move it to the decode queue once its prompt is fully processed.

- Repeat. The batch composition continuously evolves as requests arrive and finish.

Why This Approach Excels

- No Padding Waste: Ensures no computation on padded tokens.

- High Utilization: Nearly constant work keeps the GPU engaged.

- Flexible Latency/Throughput Trade-offs: Adjust packing policy and chunk sizes to prioritize either decode steps (for user interactivity) or prefill chunks (for overall throughput).

How Engines Implement Continuous Batching

Hugging Face Text Generation Inference (TGI)

TGI includes continuous batching as a core feature, augmented by optimizations such as Flash Attention, PagedAttention-based KV memory management, streaming capabilities, and robust observability. This combination enhances both throughput and deployability with open models.

vLLM and PagedAttention

The vLLM project popularized PagedAttention, which manages the KV cache much like an operating system's paged virtual memory. This reduces fragmentation and enables flexible sharing, leading to larger effective batch sizes and higher throughput. The original paper reported 2-4x higher throughput than the state-of-the-art systems of the time, such as FasterTransformer and Orca, at the same level of latency, with larger gains for longer sequences. Many serving stacks have since adopted similar ideas.
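A toy block allocator helps make the paging analogy concrete. This is a minimal sketch in the spirit of PagedAttention, not vLLM's implementation. `PagedKVAllocator`, its methods, and the block sizes are hypothetical. KV memory is carved into fixed-size blocks, and each sequence keeps a block table of physical block ids that need not be contiguous. A sequence therefore wastes at most one partially filled block, instead of reserving space for its maximum possible length.

```python
class PagedKVAllocator:
    """Toy paged KV-cache allocator (illustrative, not a real engine's API)."""

    def __init__(self, num_blocks, block_size):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))
        self.block_tables = {}  # seq_id -> list of physical block ids
        self.num_tokens = {}    # seq_id -> tokens stored so far

    def append_token(self, seq_id):
        """Reserve a slot for one more token; return (physical_block, offset)."""
        table = self.block_tables.setdefault(seq_id, [])
        count = self.num_tokens.get(seq_id, 0)
        if count % self.block_size == 0:  # last block is full (or none yet)
            if not self.free_blocks:
                raise MemoryError("out of KV blocks; a scheduler would preempt or wait")
            table.append(self.free_blocks.pop())
        self.num_tokens[seq_id] = count + 1
        return table[count // self.block_size], count % self.block_size

    def free(self, seq_id):
        """Return all of a finished sequence's blocks to the free list."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.num_tokens.pop(seq_id, None)

alloc = PagedKVAllocator(num_blocks=4, block_size=16)
for _ in range(20):
    alloc.append_token("a")    # 20 tokens -> 2 blocks (the second partly filled)
for _ in range(5):
    alloc.append_token("b")    # 5 tokens -> 1 block
print(len(alloc.free_blocks))  # 1
alloc.free("a")                # request "a" finished
print(len(alloc.free_blocks))  # 3
```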

Evolving Memory Management Strategies

Research continues to refine KV memory management. Alternatives like vAttention keep the KV cache contiguous in virtual memory and rely on system-level demand paging to avoid rewriting attention kernels, while newer work explores structured eviction strategies on paged layouts. The overarching aim remains the same: boost capacity and throughput without compromising latency.

Encoder-Decoder Models and In-Flight Batching

Most examples so far have focused on decoder-only LLMs. Encoder-decoder architectures, used by some speech, translation, and multimodal models, add cross-attention and separate encoder and decoder execution stages. NVIDIA's name for continuous batching is in-flight batching, and NVIDIA extends it to encoder-decoder models by managing both self-attention and cross-attention KV caches. The guiding idea is unchanged: keep feeding the GPU meaningful tokens as requests flow in and out.

A Gentle, Visual Mental Model

Envision your server as a token conveyor belt:

- Decode tokens are small packages that require speedy delivery to keep the cursor moving.

- Prefill chunks are larger boxes that can be divided across multiple trips.

- The scheduler maintains the belt’s weight limit M by smartly mixing small and large packages without adding empty boxes (padding).

- Attention masks ensure packages do not intermingle.

Key Trade-Offs to Manage

- Latency vs. Throughput: Prioritize decode tokens to achieve quick interactivity, or focus on prefill chunk size and density for aggregate throughput.

- Memory vs. Speed: Larger caches support longer contexts and greater concurrency but consume GPU memory; memory managers like PagedAttention help pack more requests into the same GPU memory.

- Stability vs. Adaptability: Static shapes make optimizations such as CUDA graph capture and ahead-of-time compilation easier. Ragged batches change shape from step to step; engines can compensate through internal buffering or page-based caches.

Implementation Tips and Pitfalls

- Prefer engines such as TGI or vLLM that support continuous or in-flight batching out of the box and have active communities and production deployments.

- Carefully tune your memory budget: set the maximum tokens per step and the total KV cache capacity based on the GPU memory left after model weights, activation workspace, and other runtime buffers.

- Use separate queues for decode and prefill requests: treat decode tokens as high priority and prefill segments as fillers for unused capacity.

- Dynamically adjust chunk sizes: during heavy decoding, keep prefill chunks smaller to prevent stalls; during lighter decoding, increase chunk sizes for efficiency.

- Monitor the long tail of request lengths: a few very long prompts can monopolize memory and shrink the effective batch, so consider capping input length. TGI exposes such limits in its configuration (for example, `--max-input-tokens` and `--max-total-tokens`).

- Be cautious of fragmentation: without a page-like KV layout, caches may fragment and shrink practical batch size. PagedAttention-style allocators can help address this.

- For encoder-decoder models, be ready for dual-paged caches to handle self- and cross-attention states effectively.
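For the memory-budget tip, here is a back-of-the-envelope sketch. Subtract the weights and a safety reserve from GPU memory, then divide by the KV bytes per token. `max_kv_tokens`, the 10% reserve, and the example figures are assumptions for illustration. Production engines measure actual memory use instead of guessing; vLLM, for instance, exposes a `gpu_memory_utilization` fraction.

```python
GiB = 2**30

def max_kv_tokens(gpu_bytes, weight_bytes, kv_bytes_per_token, reserve_pct=10):
    """How many tokens of KV cache fit after weights and a safety reserve.

    reserve_pct is a hypothetical headroom for activations, workspace and
    other runtime buffers.
    """
    usable = gpu_bytes * (100 - reserve_pct) // 100 - weight_bytes
    if usable <= 0:
        raise ValueError("model weights leave no room for a KV cache")
    return usable // kv_bytes_per_token

# Hypothetical: 80 GiB GPU, ~14 GiB of fp16 weights, 0.5 MiB of KV per token.
capacity = max_kv_tokens(80 * GiB, 14 * GiB, 512 * 1024)
print(capacity)          # 118784
print(capacity // 4096)  # 29 full 4,096-token sequences
```

This total is shared by all running requests. It is a different knob from the per-step token cap M, which bounds how many tokens pass through a single forward pass.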

Worked Example: A Simple Scheduler Policy

Here’s a straightforward policy that you can adapt; real engines use far more intricate logic and backpressure.

- Inputs: `max_tokens_per_step = M`; queues `DecodeQ` and `PrefillQ`

- Step 1: Pack decode tasks first

  - While the budget `M` has room and `DecodeQ` is not empty, pop request `r`

  - Add `r.next_token` to the batch (cost ~1 token)

  - Reserve its KV growth in the memory model

- Step 2: Fill with prefill chunks

  - While space remains and `PrefillQ` is not empty, pop request `p`

  - Choose a chunk length `c` such that `remaining_space - c` stays non-negative and leaves a small buffer for late-arriving decode tasks

  - Add the next `c` prompt tokens of `p` to the batch and update reservations

- Step 3: Execute one forward pass with a ragged mask

- Step 4: Post-process

  - Append generated tokens to each decode request; requeue it if not finished

  - Append KV for each prefill chunk; if the prompt is fully processed, move that request to `DecodeQ`, otherwise requeue it for more prefill

- Repeat
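The policy above can be sketched in Python. This is an illustrative toy that covers only the queueing and budget logic, not attention, KV reservation, or backpressure. The `Request` record, `schedule_step`, `run_step`, and the `decode_reserve` buffer are hypothetical names. `fake_forward` stands in for a real model.

```python
from collections import deque
from dataclasses import dataclass, field

@dataclass
class Request:
    rid: str
    prompt: list            # prompt token ids
    max_new_tokens: int
    prefilled: int = 0      # prompt tokens already in the KV cache
    generated: list = field(default_factory=list)

def schedule_step(decode_q, prefill_q, max_tokens_per_step, decode_reserve=0):
    """Build one ragged batch as (request, num_tokens) pairs: decode first, then prefill."""
    batch, budget = [], max_tokens_per_step
    # Step 1: one token per decoding request (latency-sensitive).
    while decode_q and budget >= 1:
        batch.append((decode_q.popleft(), 1))
        budget -= 1
    # Step 2: spend what is left on prefill chunks, keeping a small buffer.
    while prefill_q and budget - decode_reserve > 0:
        req = prefill_q.popleft()
        chunk = min(len(req.prompt) - req.prefilled, budget - decode_reserve)
        batch.append((req, chunk))
        budget -= chunk
    return batch

def fake_forward(batch):
    """Stand-in for one ragged forward pass: returns a dummy next token per request."""
    return {req.rid: len(req.generated) + 1 for req, _ in batch}

def run_step(decode_q, prefill_q, max_tokens_per_step, decode_reserve=0):
    batch = schedule_step(decode_q, prefill_q, max_tokens_per_step, decode_reserve)
    next_tokens = fake_forward(batch)                 # Step 3
    finished = []
    for req, n in batch:                              # Step 4: post-process
        if req.prefilled < len(req.prompt):           # this entry was a prefill chunk
            req.prefilled += n                        # its K/V are now cached
            if req.prefilled < len(req.prompt):
                prefill_q.append(req)                 # more prompt left: requeue
                continue
        req.generated.append(next_tokens[req.rid])    # decode step, or end of prefill
        if len(req.generated) >= req.max_new_tokens:
            finished.append(req)                      # done; its KV could be freed
        else:
            decode_q.append(req)
    return batch, finished

decode_q, prefill_q = deque(), deque()
prefill_q.extend([Request("a", list(range(10)), 3), Request("b", list(range(25)), 2)])
step = 0
while decode_q or prefill_q:
    batch, done = run_step(decode_q, prefill_q, max_tokens_per_step=16, decode_reserve=2)
    step += 1
    print(step, [(r.rid, n) for r, n in batch], [r.rid for r in done])
```

With a 16-token budget and a reserve of 2, request b's 25-token prompt is prefilled across three steps (4, 13, and 8 tokens) while request a keeps decoding alongside it.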

Frequently Asked Questions

1) Does continuous batching always reduce latency for a single user?

Not necessarily. Continuous batching aims to maximize total throughput across concurrent requests. Under low traffic, the extra scheduling logic can add a slight delay. Systems mitigate this by prioritizing decode steps and launching each step promptly rather than waiting for the batch to fill.

2) How is continuous batching different from dynamic batching?

Dynamic batching groups requests that arrive close together in time into a padded, rectangular batch. Continuous batching goes further: ragged packing and attention masks let prefill and decode work from different requests share each step, keeping the GPU continuously busy.

3) Why is PagedAttention frequently associated with continuous batching?

Continuous batching benefits from keeping many requests in flight, which requires large KV caches. PagedAttention minimizes KV memory waste and fragmentation, allowing more concurrent requests and higher throughput. The original paper reported 2-4x throughput gains over the state-of-the-art serving systems of the time, although actual results vary with model, hardware, and workload.

4) Can this method be applied to encoder-decoder models as well?

Yes, but with modifications. Management of both self-attention and cross-attention caches and synchronization between encoders and decoders are essential. NVIDIA refers to this processing as in-flight batching, which incorporates dual-paged cache strategies.

5) Which open-source engines support continuous batching today?

Both Hugging Face Text Generation Inference (TGI) and vLLM support principles of continuous batching and optimizations for KV caches. Continuous batching and streaming are highlighted as core features in TGI documentation, while vLLM implements PagedAttention and related memory strategies.

Conclusion

Continuous batching is more than just a single technique; it’s a collection of strategies that work harmoniously. KV caching enhances performance through reusability, chunked prefill allows memory control, and ragged batching with dynamic scheduling eliminates wasted padding. Together, they enable serving systems to integrate tasks from multiple requests continuously, delivering smooth, fast experiences to users.

Modern engines like TGI and vLLM encapsulate these ideas with practical features, making them adaptable for real-world workloads. Remember: always compute on actual tokens, never on padding. This principle is foundational to achieving efficiency at scale.

Thank You for Reading this Blog and See You Soon! 🙏 👋
